LVM provides the flexibility to organize logical volumes without being bound to rigid partitioning. If set up correctly, the LVM can grow as the underlying disk or RAID grows. For that to work, the block device needs to be added to LVM as a physical volume without any partitioning on it. When a partition of a block device is added as a physical volume instead, the physical volume cannot grow beyond the partition boundary.

If a partition was added as a physical volume, growing that physical volume is difficult, even though growing the disk itself is not a big deal when the server is a VM. Increasing the logical volume by creating another partition on the disk and adding that to the LVM gets very messy at some point.

What is the solution?

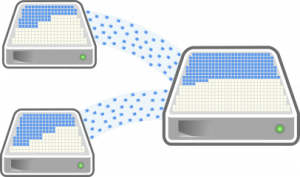

Assume a VM that already has three disks: one is the operating system disk, and the other two each contain a partition that is part of the LVM and backs the data volume. Now the data volume needs to be increased again, but the partitions on the data disks prevent this. To get out of this mess, create another disk in the hypervisor with at least the combined size of the second and third disk and add it to the VM.

As soon as the disk appears on the system, it can be added without a partition to the LVM using the following command.

    $ pvcreate /dev/vdd
      Physical volume "/dev/vdd" successfully created.

This adds the disk as a “physical volume” without the limitations imposed by partitions. The physical volume will be able to grow when the size of the disk is increased in the hypervisor.
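Enlarging the disk in the hypervisor does not enlarge the physical volume by itself; LVM has to be told to pick up the new device size. A minimal sketch of that later step, assuming the disk is /dev/vdd as in this example and that the kernel already sees the larger size (the function name `grow_pv` is made up for illustration):

```shell
# Hypothetical helper: make LVM use the full size of an unpartitioned PV.
# Assumes the disk was already enlarged in the hypervisor; run as root.
grow_pv() {
    # Without a size option, pvresize expands the PV to the current device size.
    pvresize "$1" || return 1
    # Show the new size and free space of the PV.
    pvs "$1"
}

# Usage: grow_pv /dev/vdd
```

With a partition in between, the partition itself would have to be enlarged first, which is exactly the step the unpartitioned layout avoids.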

The additional physical volume, called /dev/vdd in the example above, can now be added to the volume group of the data volume, called “datavg” in the example.

    $ vgextend datavg /dev/vdd
      Volume group "datavg" successfully extended

With the volume group extended, the new physical volume /dev/vdd is not yet used. The space it added to the volume group is available, but has not been assigned to any logical volume.
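How much space is still unassigned can be read from the volume group itself. The sketch below is one possible way to do that, assuming the volume group is called datavg as in this example; the function name `vg_free_mib` is made up. It multiplies the size of one allocation unit (`vg_extent_size`) by the number of free units (`vg_free_count`), both of which are report fields of `vgs`.

```shell
# Hypothetical helper: print the unassigned space of a volume group in MiB.
# Usage: vg_free_mib VG
vg_free_mib() {
    # --units b --nosuffix prints sizes as plain byte counts, which avoids
    # locale-dependent decimal separators such as "4,00m".
    vgs --noheadings --units b --nosuffix -o vg_extent_size,vg_free_count "$1" |
        awk '{ printf "%d\n", $1 * $2 / 1048576 }'
}

# Usage (as root): vg_free_mib datavg
```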

How to clean up the mess?

To consolidate the three disks into one, the data on the two disks containing the partitions (/dev/vdb and /dev/vdc) needs to be moved to the disk containing no partition (/dev/vdd). The space of the volume group is distributed across the disks in units of so-called “extents”. The output of “pvs” shows nicely how those extents are distributed across the physical volumes.

    $ pvs
      PV         VG     Fmt  Attr PSize   PFree
      /dev/vda2  rootvg lvm2 a--    9,75g   4,75g
      /dev/vdb1  datavg lvm2 a--   <5,00g      0
      /dev/vdc1  datavg lvm2 a--   <5,00g      0
      /dev/vdd   datavg lvm2 a--  <15,00g <15,00g

As clearly indicated, the physical volumes /dev/vdb1 and /dev/vdc1 are used entirely, while the physical volume /dev/vdd is part of the volume group but still “empty”.
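Before moving anything, it can be worth confirming that the allocated extents of the old physical volumes actually fit into the free extents of the new one. The following is only a sketch of such a check: the function name `fits_on` is made up, and it expects lines of the form "name total allocated", as produced by the `pvs` report fields `pv_name`, `pv_pe_count` and `pv_pe_alloc_count`.

```shell
# Hypothetical check: do the allocated extents of the source PVs fit into
# the free extents of the destination PV?
# Reads lines of "pv_name pv_pe_count pv_pe_alloc_count" on stdin.
# Argument: the name of the destination PV.
fits_on() {
    awk -v dest="$1" '
        BEGIN      { need = 0; free = 0; seen = 0 }
        $1 == dest { free = $2 - $3; seen = 1; next }  # free extents on the destination
                   { need += $3 }                      # allocated extents on the other PVs
        END {
            if (!seen)        { print "destination " dest " not listed"; exit 2 }
            if (need <= free) { print "ok: need " need ", free " free; exit 0 }
            print "too small: need " need ", free " free
            exit 1
        }'
}

# Usage (as root), listing the PVs of the volume group explicitly:
#   pvs --noheadings -o pv_name,pv_pe_count,pv_pe_alloc_count \
#       /dev/vdb1 /dev/vdc1 /dev/vdd | fits_on /dev/vdd
```

Fed with the made-up lines `/dev/vdb1 1279 1279`, `/dev/vdc1 1279 1279` and `/dev/vdd 3839 0`, the function prints `ok: need 2558, free 3839` and returns success. These extent counts are illustrative and not taken from the system shown here.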

To move all used extents from the old physical volumes to /dev/vdd, pvmove(8) is used. This command moves the allocated physical extents (PEs) from one physical volume to another.

    $ pvmove /dev/vdb1 /dev/vdd
      /dev/vdb1: Moved: 0,08%
      /dev/vdb1: Moved: 6,72%
      /dev/vdb1: Moved: 11,18%
      /dev/vdb1: Moved: 15,40%
      /dev/vdb1: Moved: 19,47%
      /dev/vdb1: Moved: 23,85%
      /dev/vdb1: Moved: 27,91%
      /dev/vdb1: Moved: 32,13%
      /dev/vdb1: Moved: 36,36%
      /dev/vdb1: Moved: 43,16%
      /dev/vdb1: Moved: 47,62%
      /dev/vdb1: Moved: 52,23%
      /dev/vdb1: Moved: 56,92%
      /dev/vdb1: Moved: 61,61%
      /dev/vdb1: Moved: 66,22%
      /dev/vdb1: Moved: 70,91%
      /dev/vdb1: Moved: 75,84%
      /dev/vdb1: Moved: 85,07%
      /dev/vdb1: Moved: 89,52%
      /dev/vdb1: Moved: 93,82%
      /dev/vdb1: Moved: 98,20%
      /dev/vdb1: Moved: 100,00%

The first argument defines the source physical volume the extents should be moved away from. The second argument specifies the destination physical volume to move the extents to. If the destination is not specified, the extents will be distributed across the other physical volumes of the volume group. In this case it would not make a difference, as there is no free space anywhere else.

    $ pvmove /dev/vdc1 /dev/vdd
      /dev/vdc1: Moved: 0,00%
      /dev/vdc1: Moved: 4,69%
      /dev/vdc1: Moved: 8,21%
      /dev/vdc1: Moved: 12,74%
      /dev/vdc1: Moved: 16,81%
      /dev/vdc1: Moved: 100,00%

When the extents of the second physical volume are moved, the destination physical volume does make a difference. As the /dev/vdb1 physical volume is already empty, the extents would be distributed between /dev/vdd and /dev/vdb1 if the second argument were omitted.
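With more than a couple of source volumes, the two calls above can be wrapped in a loop that always names the destination. A small sketch, assuming the device names of this example; the function name `move_all` is made up:

```shell
# Hypothetical helper: empty several source PVs onto one destination PV.
# Usage: move_all DEST SRC...
move_all() {
    dest="$1"
    shift
    for src in "$@"; do
        # Naming the destination keeps pvmove from placing extents on
        # other PVs of the volume group that happen to have free space,
        # such as a source PV that was emptied a moment ago.
        pvmove "$src" "$dest" || return 1
    done
}

# Usage (as root): move_all /dev/vdd /dev/vdb1 /dev/vdc1
```

The loop stops at the first failing pvmove, so a problem with one source volume does not go unnoticed while the next one is processed.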

    $ pvs
      PV         VG     Fmt  Attr PSize   PFree
      /dev/vda2  rootvg lvm2 a--    9,75g   4,75g
      /dev/vdb1  datavg lvm2 a--   <5,00g  <5,00g
      /dev/vdc1  datavg lvm2 a--   <5,00g  <5,00g
      /dev/vdd   datavg lvm2 a--  <15,00g   5,00g

Listing the physical volumes now shows that all extents have been moved to /dev/vdd.

The process of moving the extents can be interrupted at any time using the following command in a separate terminal.

    $ pvmove --abort

This will stop all running pvmove operations. Unless --atomic is given, the pvmove command moves one extent after the other. When the operation is aborted, extents that were already moved stay on the new physical volume, while extents not yet moved stay on their old physical volume. The data stored on the volumes remains intact, and the pvmove operation can be continued at any time.
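One way to continue after an abort is to run pvmove again for the same source and destination, which moves whatever is still allocated on the source. The sketch below first checks whether anything is left; the function name `resume_move` is made up, and `pv_pe_alloc_count` is the `pvs` report field holding the number of allocated extents on a physical volume.

```shell
# Hypothetical helper: continue emptying a source PV after an abort.
# Usage: resume_move SRC DEST
resume_move() {
    # Number of extents that are still allocated on the source PV.
    left="$(pvs --noheadings -o pv_pe_alloc_count "$1")" || return 1
    left="$(printf '%s' "$left" | tr -d '[:space:]')"
    if [ "$left" = "0" ]; then
        echo "$1 is already empty"
        return 0
    fi
    echo "$left extents left on $1, moving them to $2"
    pvmove "$1" "$2"
}

# Usage (as root): resume_move /dev/vdb1 /dev/vdd
```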

The now unused physical volumes /dev/vdb1 and /dev/vdc1 can be removed from the LVM. To do so, each physical volume first needs to be removed from the volume group.

    $ vgreduce datavg /dev/vdb1
      Removed "/dev/vdb1" from volume group "datavg"
    $ vgreduce datavg /dev/vdc1
      Removed "/dev/vdc1" from volume group "datavg"

The vgreduce command removes the physical volume from the volume group. This can only be done if no extent of that physical volume is in use.

    $ pvremove /dev/vdb1
      Labels on physical volume "/dev/vdb1" successfully wiped.
    $ pvremove /dev/vdc1
      Labels on physical volume "/dev/vdc1" successfully wiped.

As the physical volume is no longer part of any volume group, it can also be removed as a physical volume. Afterwards, /dev/vdb1 and /dev/vdc1 are no longer listed as part of any volume group or as physical volumes.

    $ pvs
      PV         VG     Fmt  Attr PSize   PFree
      /dev/vda2  rootvg lvm2 a--    9,75g 4,75g
      /dev/vdd   datavg lvm2 a--  <15,00g 5,00g
    $ vgs
      VG     #PV #LV #SN Attr   VSize   VFree
      datavg   1   1   0 wz--n- <15,00g 5,00g
      rootvg   1   2   0 wz--n-   9,75g 4,75g
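The two removal steps can also be combined into one guarded helper that refuses to touch a physical volume which still holds data. This is only a sketch under the assumptions of this example (volume group datavg, partitions /dev/vdb1 and /dev/vdc1); the function name `retire_pv` is made up:

```shell
# Hypothetical helper: drop an emptied PV from its volume group and wipe
# its LVM label. Usage: retire_pv VG PV
retire_pv() {
    # Number of extents still allocated on the PV; must be 0 to proceed.
    used="$(pvs --noheadings -o pv_pe_alloc_count "$2")" || return 1
    used="$(printf '%s' "$used" | tr -d '[:space:]')"
    if [ "$used" != "0" ]; then
        echo "$2 still holds allocated extents ($used), not removing it" >&2
        return 1
    fi
    # Remove the PV from the volume group, then wipe the PV label.
    vgreduce "$1" "$2" && pvremove "$2"
}

# Usage (as root):
#   retire_pv datavg /dev/vdb1
#   retire_pv datavg /dev/vdc1
```

vgreduce would refuse a physical volume that is still in use anyway; the explicit check mainly gives a clearer message before anything is changed.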

The disks can now be removed or used for something else, as they are no longer part of the LVM configuration.

Read more of my posts on my blog at https://blog.tinned-software.net/.